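# 2. Kubernetes 1.14 Cluster Deployment - Binary

# Kubernetes Cluster Deployment Steps

- The three officially provided deployment methods

- Kubernetes platform environment planning

- Self-signed SSL certificates

- Etcd database cluster deployment

- Installing Docker on the nodes

- Deploying the Kubernetes network

- Deploying the Master components

- Deploying the Node components

- Deploying a test example

- Deploying the Web UI (Dashboard)

- Deploying the in-cluster DNS service (CoreDNS)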

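# The Three Officially Provided Deployment Methods

minikube

Minikube is a tool that quickly runs a single-node Kubernetes locally. It is intended only for trying out Kubernetes or for day-to-day development.

Deployment guide: https://kubernetes.io/docs/setup/minikube/

kubeadm

Kubeadm is also a tool. It provides kubeadm init and kubeadm join for quickly deploying a Kubernetes cluster.

Deployment guide: https://kubernetes.io/docs/reference/setup-tools/kubeadm/kubeadm/

Binary packages

Recommended. Download the release binaries from the official site and deploy each component by hand to form a Kubernetes cluster.

Download: https://github.com/kubernetes/kubernetes/releases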

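# Kubernetes Platform Environment Planning

# Single-Master Kubernetes Architecture

# Highly Available Kubernetes Architecture

# Plan for This Article

We first build the single-master Kubernetes architecture, and later scale it out into the highly available architecture.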

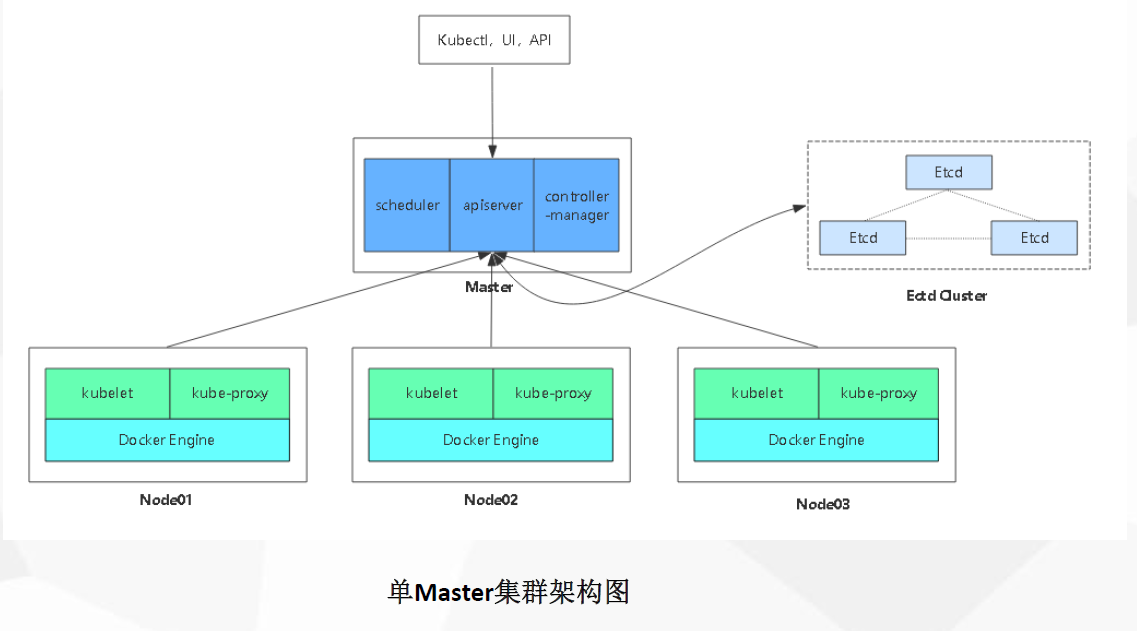

# Single-Master Kubernetes Architecture

- 192.168.0.141--master

- 4G,2P

- 192.168.0.142--node01

- 4G,2P

- 192.168.0.143--node02

- 4G,2P

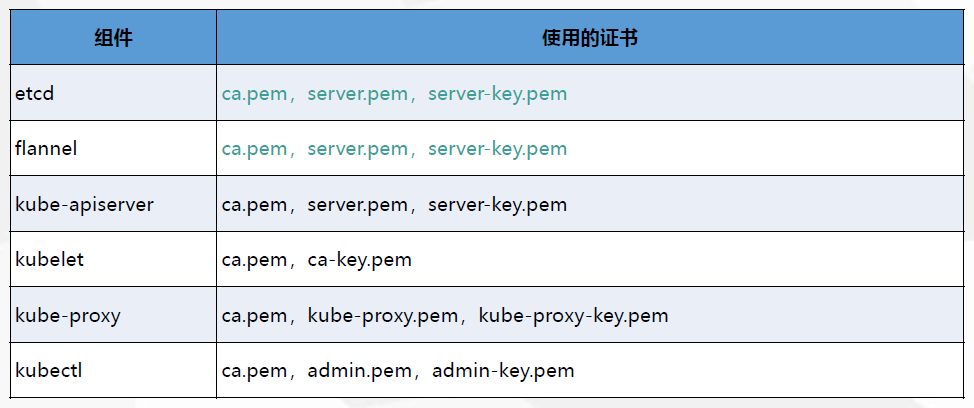

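# Self-Signed SSL Certificates

As the certificate requirements diagram above shows, both etcd and k8s need certificates.

The certificates are generated on the master machine.

# Create the certificate directories

mkdir -p /k8s/cert/{k8s-cert,etcd-cert}

## Separate directories for the k8s and etcd certificates, so they are stored by category

# Synchronize the time

Check the current system time and make sure it is correct.

ntpdate time.windows.com

## Virtual machine clocks tend to drift, so synchronize the time

date -s '2019-03-24 13:09:30'

## If synchronization fails, set the time manually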

# Generate the Certificates etcd Needs

# Enter the etcd certificate directory

cd /k8s/cert/etcd-cert

# Install the cfssl commands

curl -L https://pkg.cfssl.org/R1.2/cfssl_linux-amd64 -o /usr/local/bin/cfssl

curl -L https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64 -o /usr/local/bin/cfssljson

curl -L https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64 -o /usr/local/bin/cfssl-certinfo

chmod +x /usr/local/bin/cfssl /usr/local/bin/cfssljson /usr/local/bin/cfssl-certinfo

# Write the script that generates the CA and server certificates

vim etca-ca.sh

cat > ca-config.json <<EOF

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"www": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

EOF

cat > ca-csr.json <<EOF

{

"CN": "etcd CA",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing"

}

]

}

EOF

cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

#-----------------------

cat > server-csr.json <<EOF

{

"CN": "etcd",

"hosts": [

"192.168.0.141",

"192.168.0.142",

"192.168.0.143"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing"

}

]

}

EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=www server-csr.json | cfssljson -bare server

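# Key points in the certificate script

expiry: the certificate validity period

hosts: the valid IPs; every etcd node IP must be listed

key: the key algorithm and key size

names: the subject fields (country, city, province)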
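The hosts rule above is easy to break when a node is added later. As a minimal sketch, the helper below reports any node IP that is missing from a CSR file. The name `missing_hosts` is hypothetical, and it does a plain text search for the quoted IP rather than parsing the JSON.

```shell
#!/bin/bash
# missing_hosts CSR_FILE IP...
# Hypothetical helper: prints every IP that does not appear as a quoted
# string in the given cfssl CSR JSON file. Plain text match, not a JSON parser.
missing_hosts() {
  local csr_file=$1
  shift
  local ip
  for ip in "$@"; do
    # Matching on the surrounding quotes keeps 192.168.0.14 from matching 192.168.0.141
    if ! grep -qF "\"${ip}\"" "$csr_file"; then
      echo "$ip"
    fi
  done
}
```

For example, `missing_hosts server-csr.json 192.168.0.141 192.168.0.142 192.168.0.143` prints nothing when all three etcd node IPs are listed.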

# Generate the certificates with the etca-ca.sh script

chmod +x etca-ca.sh

./etca-ca.sh

# Generate the Certificates k8s Needs

# Enter the k8s certificate directory

cd /k8s/cert/k8s-cert/

# Write the script that generates the certificates

vim k8s.sh

cat > ca-config.json <<EOF

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

EOF

cat > ca-csr.json <<EOF

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

#-----------------------

cat > server-csr.json <<EOF

{

"CN": "kubernetes",

"hosts": [

"10.0.0.1",

"127.0.0.1",

"192.168.0.141",

"192.168.0.40",

"192.168.0.41",

"192.168.0.42",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes server-csr.json | cfssljson -bare server

#-----------------------

cat > admin-csr.json <<EOF

{

"CN": "admin",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "system:masters",

"OU": "System"

}

]

}

EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

#-----------------------

cat > kube-proxy-csr.json <<EOF

{

"CN": "system:kube-proxy",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

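# Key points in the certificate script

expiry: the certificate validity period

hosts: the valid IPs and names. Every master node IP must be listed. To prepare for high availability later, I added a few extra IPs. Do not delete the domain names or the first two IPs (10.0.0.1 and 127.0.0.1); k8s uses them by default.

key: the key algorithm and key size

names: the subject fields. If you are not familiar with them, it is best not to change them, because they carry the k8s markers (O and OU).

# Generate the certificates with the k8s.sh script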

chmod +x k8s.sh

./k8s.sh

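# Etcd Database Cluster Deployment

etcd is a distributed, consistent key-value store that can be used for service registration and discovery and for shared configuration. It has the following advantages.

- Simple: compared with the hard-to-understand Paxos algorithm, etcd achieves consistency with the simpler and easier-to-implement Raft algorithm, and exposes its API over gRPC

- Secure: supports TLS communication, and can control read and write access to keys per user

- High performance: 10,000 writes per second

# etcd download

https://github.com/etcd-io/etcd/releases

# etcd version

This deployment uses etcd 3.3.10.

# etcd cluster nodes

- 192.168.0.141

- 192.168.0.142

- 192.168.0.143

# Main etcd files

etcd ## the main program that runs etcd

etcdctl ## the etcd client management command

/var/log/messages ## log file

# On 192.168.0.141

# Create the directory, extract the package, and enter it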

mkdir /k8s/soft

cd /k8s/soft

tar -zxf etcd-v3.3.10-linux-amd64.tar.gz

cd etcd-v3.3.10-linux-amd64

# Create the directories

mkdir -p /usr/local/etcd/{bin,conf,ssl}

## bin: executables

## conf: configuration files

## ssl: certificate files

# Copy the binaries into etcd's bin directory

cp -a /k8s/soft/etcd-v3.3.10-linux-amd64/etcd* /usr/local/etcd/bin/

Copy the certificates into etcd's ssl directory

cp -a /k8s/cert/etcd-cert/{ca,server,server-key}.pem /usr/local/etcd/ssl/

# Write the deployment script

vim etcd.sh

#!/bin/bash

# example: ./etcd.sh etcd01 192.168.1.10 etcd02=https://192.168.1.11:2380,etcd03=https://192.168.1.12:2380

ETCD_NAME=$1

ETCD_IP=$2

ETCD_CLUSTER=$3

WORK_DIR=/usr/local/etcd

cat <<EOF >$WORK_DIR/conf/etcd

#[Member]

ETCD_NAME="${ETCD_NAME}"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://${ETCD_IP}:2380"

ETCD_LISTEN_CLIENT_URLS="https://${ETCD_IP}:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${ETCD_IP}:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://${ETCD_IP}:2379"

ETCD_INITIAL_CLUSTER="etcd01=https://${ETCD_IP}:2380,${ETCD_CLUSTER}"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

EOF

cat <<EOF >/usr/lib/systemd/system/etcd.service

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=${WORK_DIR}/conf/etcd

ExecStart=${WORK_DIR}/bin/etcd \

--name=\${ETCD_NAME} \

--data-dir=\${ETCD_DATA_DIR} \

--listen-peer-urls=\${ETCD_LISTEN_PEER_URLS} \

--listen-client-urls=\${ETCD_LISTEN_CLIENT_URLS},http://127.0.0.1:2379 \

--advertise-client-urls=\${ETCD_ADVERTISE_CLIENT_URLS} \

--initial-advertise-peer-urls=\${ETCD_INITIAL_ADVERTISE_PEER_URLS} \

--initial-cluster=\${ETCD_INITIAL_CLUSTER} \

--initial-cluster-token=\${ETCD_INITIAL_CLUSTER_TOKEN} \

--initial-cluster-state=new \

--cert-file=${WORK_DIR}/ssl/server.pem \

--key-file=${WORK_DIR}/ssl/server-key.pem \

--peer-cert-file=${WORK_DIR}/ssl/server.pem \

--peer-key-file=${WORK_DIR}/ssl/server-key.pem \

--trusted-ca-file=${WORK_DIR}/ssl/ca.pem \

--peer-trusted-ca-file=${WORK_DIR}/ssl/ca.pem

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable etcd

systemctl restart etcd

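# Run the script

chmod +x etcd.sh

./etcd.sh etcd01 192.168.0.141 etcd02=https://192.168.0.142:2380,etcd03=https://192.168.0.143:2380

## etcd01 is the node name, followed by its IP; the last argument lists the other nodes

## The ports are configured in the script above

## Port 2380: cluster (peer) communication

## Port 2379: client data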
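The full member list has to be identical in ETCD_INITIAL_CLUSTER on every node, so it can help to build it once from the node IPs. The following is a minimal sketch, assuming the etcd01, etcd02, ... naming used in this article; the function name `build_initial_cluster` is hypothetical.

```shell
#!/bin/bash
# build_initial_cluster PREFIX IP...
# Hypothetical helper: prints a comma-separated member list such as
# etcd01=https://IP1:2380,etcd02=https://IP2:2380 (peer port 2380 as in this article).
build_initial_cluster() {
  local prefix=$1
  shift
  local i=1 out="" ip
  for ip in "$@"; do
    # ${out:+$out,} adds a comma only when out is already non-empty
    out="${out:+$out,}$(printf '%s%02d=https://%s:2380' "$prefix" "$i" "$ip")"
    i=$((i + 1))
  done
  echo "$out"
}
```

Calling `build_initial_cluster etcd 192.168.0.141 192.168.0.142 192.168.0.143` yields the value used for ETCD_INITIAL_CLUSTER on the three nodes.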

# Send the etcd directory to the other two nodes

scp -r /usr/local/etcd/ root@192.168.0.142:/usr/local/

scp -r /usr/local/etcd/ root@192.168.0.143:/usr/local/

scp /usr/lib/systemd/system/etcd.service root@192.168.0.142:/usr/lib/systemd/system/

scp /usr/lib/systemd/system/etcd.service root@192.168.0.143:/usr/lib/systemd/system/

# Start

systemctl daemon-reload

systemctl enable etcd

systemctl start etcd

# View the log

tail -100f /var/log/messages

## The log keeps reporting that the other two nodes cannot be reached; this stops once the other two nodes are started

# On 192.168.0.142

# Edit the etcd configuration file

vim /usr/local/etcd/conf/etcd

#[Member]

ETCD_NAME="etcd02"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.0.142:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.0.142:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.0.142:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.0.142:2379"

ETCD_INITIAL_CLUSTER="etcd01=https://192.168.0.141:2380,etcd02=https://192.168.0.142:2380,etcd03=https://192.168.0.143:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

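## Change the node name and IPs to those of the current node on every line except the third from last (ETCD_INITIAL_CLUSTER), which describes the whole cluster and stays unchanged

# Start

systemctl daemon-reload

systemctl enable etcd

systemctl start etcd

# View the log

tail -100f /var/log/messages

## The log keeps reporting unreachable peers until the remaining nodes are started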

# On 192.168.0.143

# Edit the etcd configuration file

vim /usr/local/etcd/conf/etcd

#[Member]

ETCD_NAME="etcd03"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.0.143:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.0.143:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.0.143:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.0.143:2379"

ETCD_INITIAL_CLUSTER="etcd01=https://192.168.0.141:2380,etcd02=https://192.168.0.142:2380,etcd03=https://192.168.0.143:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

## Change the node name and IPs to those of the current node on every line except the third from last (ETCD_INITIAL_CLUSTER), which describes the whole cluster and stays unchanged

# Start

systemctl daemon-reload

systemctl enable etcd

systemctl start etcd

# View the log

tail -100f /var/log/messages

## If the log still reports unreachable peers, check that the other two nodes are running

# Check that the cluster is complete

/usr/local/etcd/bin/etcdctl --ca-file=/usr/local/etcd/ssl/ca.pem --cert-file=/usr/local/etcd/ssl/server.pem --key-file=/usr/local/etcd/ssl/server-key.pem --endpoints="https://192.168.0.141:2379,https://192.168.0.142:2379,https://192.168.0.143:2379" cluster-health

Result:

member 4744090d32d62b14 is healthy: got healthy result from https://192.168.0.142:2379

member cad5c53fd12ea4d7 is healthy: got healthy result from https://192.168.0.143:2379

member ff44f0163fd2a515 is healthy: got healthy result from https://192.168.0.141:2379

cluster is healthy
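When this check is scripted, the output can be tested instead of read by eye. A minimal sketch, assuming the `cluster-health` output format shown above; the function name `etcd_cluster_ok` is hypothetical.

```shell
#!/bin/bash
# etcd_cluster_ok EXPECTED_MEMBERS OUTPUT
# Hypothetical helper: succeeds only if OUTPUT (the text printed by
# "etcdctl ... cluster-health") lists EXPECTED_MEMBERS healthy members
# and ends with the "cluster is healthy" summary line.
etcd_cluster_ok() {
  local expected=$1 output=$2 healthy
  # grep -c prints 0 and exits non-zero when nothing matches, hence "|| true"
  healthy=$(printf '%s\n' "$output" | grep -c '^member .* is healthy' || true)
  [ "$healthy" -eq "$expected" ] || return 1
  printf '%s\n' "$output" | grep -q '^cluster is healthy$'
}
```

A typical call would capture the output of the etcdctl command above into a variable and run `etcd_cluster_ok 3 "$output"`.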

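# Error Summary

I ran into one error: after starting a node, the first node kept reporting certificate errors for it. The cause turned out to be an incorrect server clock; correcting the time fixed it.

# Installing Docker on the Nodes

Install docker on the node machines.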

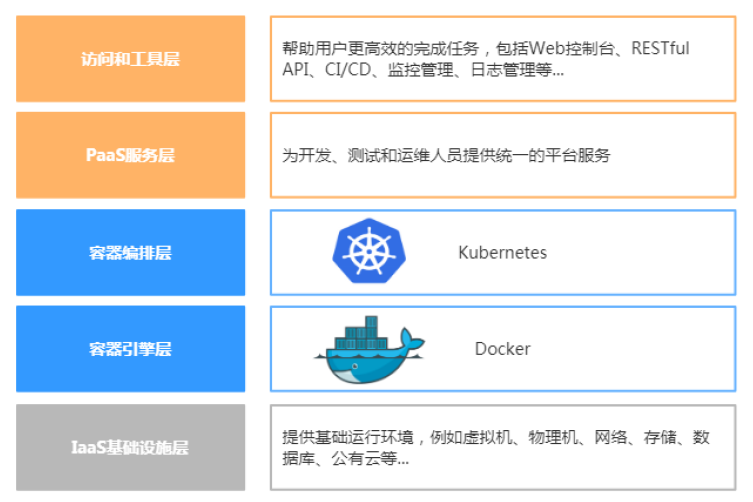

# Overall k8s layer diagram

# Node machines

- 192.168.0.142

- 192.168.0.143

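# Install docker

Perform the following steps on every node.

# Install the dependencies

yum install -y yum-utils device-mapper-persistent-data lvm2

# Configure the docker yum repository

Configure Docker's official yum repository so that the latest official package is installed.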

yum-config-manager --add-repo https://download.docker.com/linux/centos/docker-ce.repo

# Install docker-ce

yum -y install docker-ce

# Install the DaoCloud registry mirror

Site: www.daocloud.io/mirror

curl -sSL https://get.daocloud.io/daotools/set_mirror.sh | sh -s http://f1361db2.m.daocloud.io

## If this reports an error, the local clock is probably out of sync. The mirror setting is written to /etc/docker/daemon.json, which docker reads by default

# Start docker

systemctl start docker

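# Deploying the Kubernetes Network

# Kubernetes network model (CNI)

- Container Network Interface (CNI):

  - The container network interface, led by Google and CoreOS.

- Basic requirements of the Kubernetes network model:

  - One IP per Pod

  - Every Pod has its own IP, and all containers in a Pod share one network (the same IP)

  - All containers can communicate with all other containers

  - All nodes can communicate with all containers

# Implementations of the Kubernetes network model

The model is mainly implemented by the technologies below.

The ones most commonly used in companies are Flannel, Calico, and Contiv.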

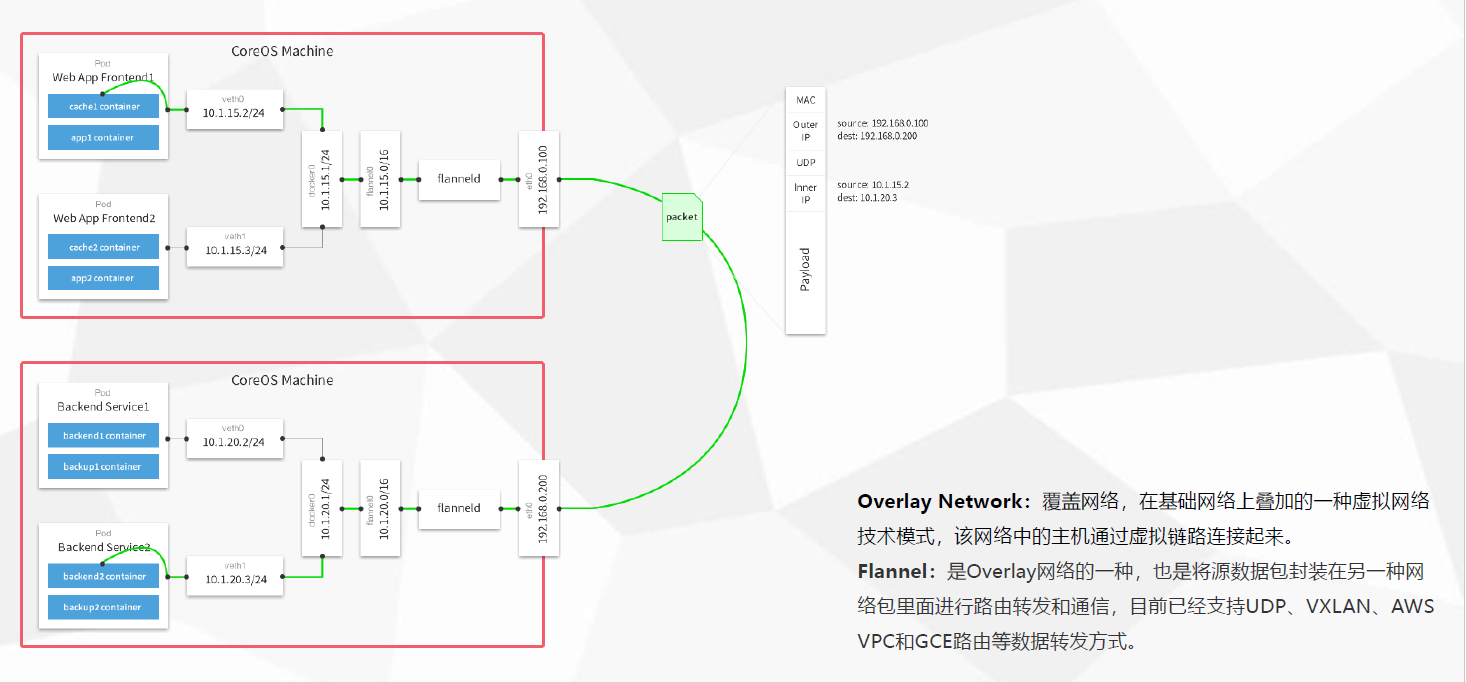

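# Flannel network plugin - (for fewer than about 100 machines)

- Flannel is a network planning service designed by the CoreOS team for Kubernetes. Put simply, it gives the Docker containers created on different node hosts virtual IP addresses that are unique across the whole cluster.

- In the default Docker configuration, the Docker service on each node allocates IPs for its own containers independently. As a result, containers on different nodes may receive the same internal IP address. Flannel lets these containers find each other by IP address, that is, ping each other.

- Flannel is designed to re-plan how IP addresses are used across all nodes in the cluster, so that containers on different nodes get non-duplicate IP addresses that belong to one internal network and can communicate directly over those internal IPs.

- Flannel is essentially an overlay network: it wraps the original packets inside another kind of network packet for routing, forwarding, and communication. It currently supports udp, vxlan, host-gw, aws-vpc, gce, and alloc as forwarding backends. The default backend for traffic between nodes is UDP.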

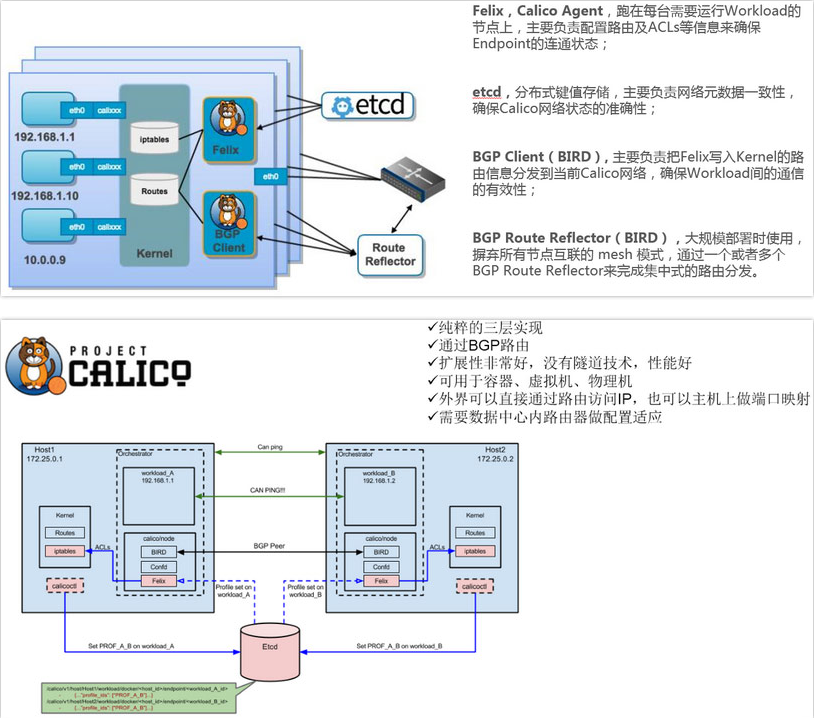

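# Calico network plugin - (for more than 100 machines)

- Calico is a pure layer 3 data center networking solution that integrates seamlessly with IaaS cloud architectures such as OpenStack, and provides controllable IP communication between VMs, containers, and bare metal. Calico does not use an overlay network such as flannel or the libnetwork overlay driver. It is a pure layer 3 approach that uses virtual routing instead of virtual switching, and each virtual router propagates reachability information (routes) to the rest of the data center over BGP.

- On every compute node, Calico uses the Linux kernel to implement an efficient vRouter that handles data forwarding. Each vRouter advertises the routes of the workloads running on it to the whole Calico network over BGP. Small deployments can peer directly; large deployments can use designated BGP route reflectors.

- Calico nodes can use the data center's network fabric directly (L2 or L3), without extra NAT, tunnels, or an overlay network.

- Built on iptables, Calico also provides rich and flexible network policy, using ACLs on each node to provide multi-tenant isolation of workloads, security groups, and other reachability restrictions.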

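# Deploying the Kubernetes Network - Flannel

Flannel is the network model chosen here because it is simpler to use than Calico. Flannel is generally used for clusters of up to about 100 machines; beyond that, Calico is recommended.

Flannel is also an officially recommended network model.

Overlay Network: a virtual network technology layered on top of the underlying network, in which hosts are connected through virtual links.

Flannel: one kind of overlay network. It encapsulates the source packets inside another kind of network packet for routing and forwarding, and currently supports UDP, VXLAN, Host-GW, AWS VPC, and GCE routes as forwarding backends.

# Write a subnet configuration into etcd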

/usr/local/etcd/bin/etcdctl --ca-file=/usr/local/etcd/ssl/ca.pem --cert-file=/usr/local/etcd/ssl/server.pem --key-file=/usr/local/etcd/ssl/server-key.pem --endpoints="https://192.168.0.141:2379,https://192.168.0.142:2379,https://192.168.0.143:2379" set /coreos.com/network/config '{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}'

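## set: creates the subnet configuration

## Network: the network range allocated to docker

## Backend: which flannel forwarding backend to use

## All nodes on the same LAN: Host-GW is recommended

## Nodes spread across multiple networks: VXLAN is recommended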

# Check that the subnet configuration was written

/usr/local/etcd/bin/etcdctl --ca-file=/usr/local/etcd/ssl/ca.pem --cert-file=/usr/local/etcd/ssl/server.pem --key-file=/usr/local/etcd/ssl/server-key.pem --endpoints="https://192.168.0.141:2379,https://192.168.0.142:2379,https://192.168.0.143:2379" get /coreos.com/network/config

Result:

{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}
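Because the value stored in etcd is a small JSON document, switching the backend only means changing the Type field. A minimal sketch that renders the document for a given network and backend; the function name `flannel_config` is hypothetical and the output layout simply mirrors the value shown above.

```shell
#!/bin/bash
# flannel_config NETWORK [BACKEND]
# Hypothetical helper: prints the JSON stored under /coreos.com/network/config.
# BACKEND defaults to vxlan, the backend used in this article.
flannel_config() {
  local network=$1 backend=${2:-vxlan}
  printf '{ "Network": "%s", "Backend": {"Type": "%s"}}\n' "$network" "$backend"
}
```

For instance, `flannel_config 172.17.0.0/16 host-gw` prints the same document with the host-gw backend, which could then be passed as the value of the `set` command above.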

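# Download the flannel binary package

https://github.com/coreos/flannel/releases

- flannel can also be deployed on the master node, but that is optional

- It must be deployed on the nodes:

  - 192.168.0.142

  - 192.168.0.143

# On 192.168.0.142

# Create the directory, enter it, and extract the package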

mkdir /k8s

cd /k8s/

tar -zxf flannel-v0.10.0-linux-amd64.tar.gz

# Create the directories

mkdir -p /usr/local/kubernetes/{bin,conf,ssl}

# Copy the binaries into the kubernetes bin directory

cp flanneld mk-docker-opts.sh /usr/local/kubernetes/bin/

# Write the deployment script

vim flanneld.sh

#!/bin/bash

ETCD_ENDPOINTS=${1:-"http://127.0.0.1:2379"}

cat <<EOF >/usr/local/kubernetes/conf/flanneld

FLANNEL_OPTIONS="--etcd-endpoints=${ETCD_ENDPOINTS} \

-etcd-cafile=/usr/local/etcd/ssl/ca.pem \

-etcd-certfile=/usr/local/etcd/ssl/server.pem \

-etcd-keyfile=/usr/local/etcd/ssl/server-key.pem"

EOF

cat <<EOF >/usr/lib/systemd/system/flanneld.service

[Unit]

Description=Flanneld overlay address etcd agent

After=network-online.target network.target

Before=docker.service

[Service]

Type=notify

EnvironmentFile=/usr/local/kubernetes/conf/flanneld

ExecStart=/usr/local/kubernetes/bin/flanneld --ip-masq \$FLANNEL_OPTIONS

ExecStartPost=/usr/local/kubernetes/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/subnet.env

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

cat <<EOF >/usr/lib/systemd/system/docker.service

[Unit]

Description=Docker Application Container Engine

Documentation=https://docs.docker.com

After=network-online.target firewalld.service

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=/run/flannel/subnet.env

ExecStart=/usr/bin/dockerd \$DOCKER_NETWORK_OPTIONS

ExecReload=/bin/kill -s HUP \$MAINPID

LimitNOFILE=infinity

LimitNPROC=infinity

LimitCORE=infinity

TimeoutStartSec=0

Delegate=yes

KillMode=process

Restart=on-failure

StartLimitBurst=3

StartLimitInterval=60s

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable flanneld

systemctl restart flanneld

systemctl restart docker

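## /run/flannel/: this directory holds the files for the subnet created above

# Run the script

chmod +x flanneld.sh

./flanneld.sh https://192.168.0.141:2379,https://192.168.0.142:2379,https://192.168.0.143:2379

## Pass the etcd cluster endpoints when running the script

# The script generates three configuration files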

cat /usr/local/kubernetes/conf/flanneld

FLANNEL_OPTIONS="--etcd-endpoints=https://192.168.0.141:2379,https://192.168.0.142:2379,https://192.168.0.143:2379 -etcd-cafile=/usr/local/etcd/ssl/ca.pem -etcd-certfile=/usr/local/etcd/ssl/server.pem -etcd-keyfile=/usr/local/etcd/ssl/server-key.pem"

====================================================================

cat /usr/lib/systemd/system/flanneld.service

[Unit]

Description=Flanneld overlay address etcd agent

After=network-online.target network.target

Before=docker.service

[Service]

Type=notify

EnvironmentFile=/usr/local/kubernetes/conf/flanneld

ExecStart=/usr/local/kubernetes/bin/flanneld --ip-masq $FLANNEL_OPTIONS

ExecStartPost=/usr/local/kubernetes/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/subnet.env

Restart=on-failure

[Install]

WantedBy=multi-user.target

====================================================================

cat /usr/lib/systemd/system/docker.service

[Unit]

Description=Docker Application Container Engine

Documentation=https://docs.docker.com

After=network-online.target firewalld.service

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=/run/flannel/subnet.env

ExecStart=/usr/bin/dockerd $DOCKER_NETWORK_OPTIONS

ExecReload=/bin/kill -s HUP $MAINPID

LimitNOFILE=infinity

LimitNPROC=infinity

LimitCORE=infinity

TimeoutStartSec=0

Delegate=yes

KillMode=process

Restart=on-failure

StartLimitBurst=3

StartLimitInterval=60s

[Install]

WantedBy=multi-user.target

# Start the flanneld network

systemctl daemon-reload

systemctl enable flanneld

systemctl start flanneld

systemctl restart docker

# Check that docker is using the flanneld network

ifconfig

docker0: flags=4099<UP,BROADCAST,MULTICAST> mtu 1500

inet 172.17.56.1 netmask 255.255.255.0 broadcast 172.17.56.255

ether 02:42:15:ef:ba:64 txqueuelen 0 (Ethernet)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

flannel.1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 172.17.56.0 netmask 255.255.255.255 broadcast 0.0.0.0

inet6 fe80::9ce8:8eff:fe50:a1b7 prefixlen 64 scopeid 0x20

ether 9e:e8:8e:50:a1:b7 txqueuelen 0 (Ethernet)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 7 overruns 0 carrier 0 collisions 0

## docker0 and flannel.1 are on the same subnet, which shows that docker is using the flanneld network
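The same-subnet check can be scripted by comparing the first three octets of the two addresses. This is a minimal sketch that assumes a /24 per node, as the 255.255.255.0 netmask of docker0 above suggests; the function name `same_slash24` is hypothetical.

```shell
#!/bin/bash
# same_slash24 IP_A IP_B
# Hypothetical helper: succeeds when both IPv4 addresses share the same
# first three octets, i.e. fall in the same /24.
same_slash24() {
  # ${1%.*} strips the last dot and everything after it
  [ "${1%.*}" = "${2%.*}" ]
}
```

With the addresses from the output above, `same_slash24 172.17.56.1 172.17.56.0` succeeds.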

# Send flannel to the other node

scp -r /usr/local/kubernetes/ root@192.168.0.143:/usr/local/

scp /usr/lib/systemd/system/{docker,flanneld}.service root@192.168.0.143:/usr/lib/systemd/system/

# On 192.168.0.143

On 192.168.0.143 the services can be started directly; no configuration changes are needed.

# Start the flanneld network

systemctl daemon-reload

systemctl enable flanneld

systemctl start flanneld

systemctl restart docker

# Check that docker is using the flanneld network

ifconfig

docker0: flags=4099<UP,BROADCAST,MULTICAST> mtu 1500

inet 172.17.10.1 netmask 255.255.255.0 broadcast 172.17.10.255

ether 02:42:c2:c3:04:f9 txqueuelen 0 (Ethernet)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

flannel.1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 172.17.10.0 netmask 255.255.255.255 broadcast 0.0.0.0

inet6 fe80::9c52:51ff:feb0:21e9 prefixlen 64 scopeid 0x20

ether 9e:52:51:b0:21:e9 txqueuelen 0 (Ethernet)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 7 overruns 0 carrier 0 collisions 0

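## docker0 and flannel.1 are on the same subnet, which shows that docker is using the flanneld network

# Test container connectivity between the nodes

Create a container on each of the two nodes and ping one from the other.

docker container run -it busybox

## Run this on each node

ifconfig

## Shows the IP of the container

ping <container IP>

## From the container on one node, ping the IP of the container on the other node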

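# Deploying the Master Components

Before deploying Kubernetes, make sure etcd, flannel, and docker are all working properly; otherwise fix the problems before continuing.

Three components need to be deployed:

- kube-apiserver

- kube-controller-manager

- kube-scheduler

Download the binary package: https://github.com/kubernetes/kubernetes/blob/master/CHANGELOG-1.12.md The kubernetes-server-linux-amd64.tar.gz package is all you need; it contains all the required components.

# Deploying the kube-apiserver component

# Enter the directory, extract the package, and enter it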

cd /k8s/soft

tar -zxf kubernetes-server-linux-amd64.tar.gz

cd kubernetes

# Create the directories

mkdir -p /usr/local/kubernetes/{bin,conf,ssl,logs}

# Copy the binaries into the kubernetes bin directory

cd server/bin/

cp kube-apiserver kube-controller-manager kubectl kube-scheduler /usr/local/kubernetes/bin/

ln -s /usr/local/kubernetes/bin/kubectl /usr/bin/

# Write the deployment script

vim apiserver.sh

#!/bin/bash

MASTER_ADDRESS=$1

ETCD_SERVERS=$2

cat <<EOF >/usr/local/kubernetes/conf/kube-apiserver

KUBE_APISERVER_OPTS="--logtostderr=false \\

--log-dir=/usr/local/kubernetes/logs \\

--v=4 \\

--etcd-servers=${ETCD_SERVERS} \\

--bind-address=${MASTER_ADDRESS} \\

--secure-port=6443 \\

--advertise-address=${MASTER_ADDRESS} \\

--allow-privileged=true \\

--service-cluster-ip-range=10.0.0.0/24 \\

--service-node-port-range=30000-50000 \\

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction \\

--authorization-mode=RBAC,Node \\

--kubelet-https=true \\

--enable-bootstrap-token-auth \\

--token-auth-file=/usr/local/kubernetes/conf/token.csv \\

--tls-cert-file=/usr/local/kubernetes/ssl/server.pem \\

--tls-private-key-file=/usr/local/kubernetes/ssl/server-key.pem \\

--client-ca-file=/usr/local/kubernetes/ssl/ca.pem \\

--service-account-key-file=/usr/local/kubernetes/ssl/ca-key.pem \\

--etcd-cafile=/usr/local/etcd/ssl/ca.pem \\

--etcd-certfile=/usr/local/etcd/ssl/server.pem \\

--etcd-keyfile=/usr/local/etcd/ssl/server-key.pem"

EOF

cat <<EOF >/usr/lib/systemd/system/kube-apiserver.service

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/usr/local/kubernetes/conf/kube-apiserver

ExecStart=/usr/local/kubernetes/bin/kube-apiserver \$KUBE_APISERVER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable kube-apiserver

systemctl restart kube-apiserver

Run the script

chmod +x apiserver.sh

./apiserver.sh 192.168.0.141 https://192.168.0.141:2379,https://192.168.0.142:2379,https://192.168.0.143:2379

Copy the certificates into the kubernetes ssl directory

cd /k8s/cert/k8s-cert/

cp ca.pem ca-key.pem server.pem server-key.pem /usr/local/kubernetes/ssl/

# Generate the token.csv authentication file

head -c 16 /dev/urandom | od -An -t x | tr -d ' '

## Generates the token

echo '0fb61c46f8991b718eb38d27b605b008,kubelet-bootstrap,10001,"system:kubelet-bootstrap"' > /usr/local/kubernetes/conf/token.csv

## 0fb61c46f8991b718eb38d27b605b008: the token

## kubelet-bootstrap: the user name

## 10001: the user ID (UID)

## system:kubelet-bootstrap: the group the user belongs to
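Generating the token and writing the line are two manual steps above, which makes it easy to paste a stale token. As a minimal sketch, the two steps can be wrapped in small functions. The names `new_bootstrap_token` and `token_csv_line` are hypothetical, and `od -t x1` is used here instead of `-t x` so the output is a plain byte-by-byte hex string.

```shell
#!/bin/bash
# Hypothetical helper: prints a random 32-character lowercase hex token.
new_bootstrap_token() {
  head -c 16 /dev/urandom | od -An -t x1 | tr -d ' \n'
}

# token_csv_line TOKEN
# Hypothetical helper: prints one token.csv line in the order
# token,user,uid,"group", using the user, UID, and group from this article.
token_csv_line() {
  local token=$1
  printf '%s,kubelet-bootstrap,10001,"system:kubelet-bootstrap"\n' "$token"
}
```

One possible use is `token_csv_line "$(new_bootstrap_token)" > /usr/local/kubernetes/conf/token.csv`, after which the same token has to be used as BOOTSTRAP_TOKEN when the kubeconfig files are generated.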

# Start kube-apiserver

systemctl daemon-reload

systemctl enable kube-apiserver

systemctl start kube-apiserver

Ports: 8080, 6443

# Deploying the kube-controller-manager component

# Write the deployment script

cd /k8s/soft/kubernetes/server/bin/

vim controller-manager.sh

#!/bin/bash

MASTER_ADDRESS=$1

cat <<EOF >/usr/local/kubernetes/conf/kube-controller-manager

KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=false \\

--log-dir=/usr/local/kubernetes/logs \\

--v=4 \\

--master=${MASTER_ADDRESS}:8080 \\

--leader-elect=true \\

--address=127.0.0.1 \\

--service-cluster-ip-range=10.0.0.0/24 \\

--cluster-name=kubernetes \\

--cluster-signing-cert-file=/usr/local/kubernetes/ssl/ca.pem \\

--cluster-signing-key-file=/usr/local/kubernetes/ssl/ca-key.pem \\

--root-ca-file=/usr/local/kubernetes/ssl/ca.pem \\

--service-account-private-key-file=/usr/local/kubernetes/ssl/ca-key.pem \\

--experimental-cluster-signing-duration=87600h0m0s"

EOF

cat <<EOF >/usr/lib/systemd/system/kube-controller-manager.service

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/usr/local/kubernetes/conf/kube-controller-manager

ExecStart=/usr/local/kubernetes/bin/kube-controller-manager \$KUBE_CONTROLLER_MANAGER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable kube-controller-manager

systemctl restart kube-controller-manager

# Run the script

chmod +x controller-manager.sh

./controller-manager.sh 127.0.0.1

## controller-manager connects to port 8080 of the apiserver, so make sure the apiserver is running before starting it

# Start kube-controller-manager

systemctl daemon-reload

systemctl enable kube-controller-manager

systemctl start kube-controller-manager

# Deploying the kube-scheduler component

# Write the deployment script

cd /k8s/soft/kubernetes/server/bin/

vim scheduler.sh

#!/bin/bash

MASTER_ADDRESS=$1

cat <<EOF >/usr/local/kubernetes/conf/kube-scheduler

KUBE_SCHEDULER_OPTS="--logtostderr=false \\

--log-dir=/usr/local/kubernetes/logs \\

--v=4 \\

--master=${MASTER_ADDRESS}:8080 \\

--leader-elect"

EOF

cat <<EOF >/usr/lib/systemd/system/kube-scheduler.service

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/usr/local/kubernetes/conf/kube-scheduler

ExecStart=/usr/local/kubernetes/bin/kube-scheduler \$KUBE_SCHEDULER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable kube-scheduler

systemctl restart kube-scheduler

# Run the script

chmod +x scheduler.sh

./scheduler.sh 127.0.0.1

## kube-scheduler connects to port 8080 of the apiserver, so make sure the apiserver is running before starting it

# Start kube-scheduler

systemctl daemon-reload

systemctl enable kube-scheduler

systemctl start kube-scheduler

# Check the status of the cluster components

kubectl get cs

NAME STATUS MESSAGE ERROR

controller-manager Healthy ok

scheduler Healthy ok

etcd-0 Healthy {"health":"true"}

etcd-2 Healthy {"health":"true"}

etcd-1 Healthy {"health":"true"}
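In a script, the table above can be filtered down to the components that are not healthy. A minimal sketch, assuming the column layout shown above (NAME first, STATUS second); the function name `unhealthy_components` is hypothetical.

```shell
#!/bin/bash
# Hypothetical helper: reads "kubectl get cs" output on stdin and prints the
# NAME of every component whose STATUS column is not "Healthy".
unhealthy_components() {
  # NR > 1 skips the header line
  awk 'NR > 1 && $2 != "Healthy" { print $1 }'
}
```

Piping `kubectl get cs` into `unhealthy_components` prints nothing when every component reports Healthy.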

# Deploying the Node Components

# Two components need to be deployed

- kubelet

- kube-proxy

# The following steps are performed on the master node

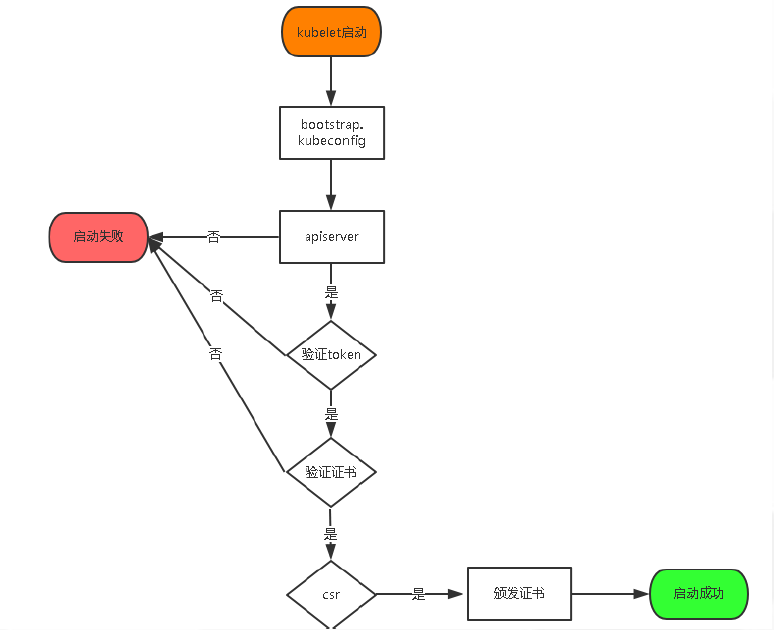

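# Bind to the system cluster role

Bind the kubelet-bootstrap user to the system cluster role.

kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --user=kubelet-bootstrap

## This binds the user from the token.csv file generated above to the cluster role

# Write the script that generates the kubeconfig files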

cd /k8s/soft/kubernetes/server/bin/

vim kubeconfig.sh

#!/bin/bash

APISERVER=$1

SSL_DIR=$2

BOOTSTRAP_TOKEN=0fb61c46f8991b718eb38d27b605b008

# Create the kubelet bootstrapping kubeconfig

export KUBE_APISERVER="https://$APISERVER:6443"

# Set the cluster parameters

kubectl config set-cluster kubernetes \

--certificate-authority=$SSL_DIR/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=bootstrap.kubeconfig

# Set the client authentication parameters

kubectl config set-credentials kubelet-bootstrap \

--token=${BOOTSTRAP_TOKEN} \

--kubeconfig=bootstrap.kubeconfig

# Set the context parameters

kubectl config set-context default \

--cluster=kubernetes \

--user=kubelet-bootstrap \

--kubeconfig=bootstrap.kubeconfig

# Set the default context

kubectl config use-context default --kubeconfig=bootstrap.kubeconfig

#----------------------

# Create the kube-proxy kubeconfig file

kubectl config set-cluster kubernetes \

--certificate-authority=$SSL_DIR/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=kube-proxy.kubeconfig

kubectl config set-credentials kube-proxy \

--client-certificate=$SSL_DIR/kube-proxy.pem \

--client-key=$SSL_DIR/kube-proxy-key.pem \

--embed-certs=true \

--kubeconfig=kube-proxy.kubeconfig

kubectl config set-context default \

--cluster=kubernetes \

--user=kube-proxy \

--kubeconfig=kube-proxy.kubeconfig

kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

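## Note: the BOOTSTRAP_TOKEN variable must hold the token from the token.csv file generated earlier

## These files hold the credentials for authenticating to the apiserver, so that the other components are allowed to access it

Run the script

chmod +x kubeconfig.sh

./kubeconfig.sh 192.168.0.141 /k8s/cert/k8s-cert/

## First argument: the IP of the master node

## Second argument: the directory that holds the generated k8s certificates

Copy the generated files to the nodes

cd /k8s/soft/kubernetes/server/bin/

## kubeconfig.sh writes the kubeconfig files into the directory it was run from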

scp bootstrap.kubeconfig kube-proxy.kubeconfig root@192.168.0.142:/usr/local/kubernetes/conf/

scp bootstrap.kubeconfig kube-proxy.kubeconfig root@192.168.0.143:/usr/local/kubernetes/conf/

cd /k8s/soft/kubernetes/server/bin

scp kubelet kube-proxy root@192.168.0.142:/usr/local/kubernetes/bin/

scp kubelet kube-proxy root@192.168.0.143:/usr/local/kubernetes/bin/

# On node 192.168.0.142

# Deploying kubelet

# Create the directories

mkdir -p /usr/local/kubernetes/{bin,conf,ssl,logs}

# Write the deployment script

cd /k8s

vim kubelet.sh

#!/bin/bash

NODE_ADDRESS=$1

DNS_SERVER_IP=${2:-"10.0.0.2"}

cat <<EOF >/usr/local/kubernetes/conf/kubelet

KUBELET_OPTS="--logtostderr=false \\

--log-dir=/usr/local/kubernetes/logs \\

--v=4 \\

--hostname-override=${NODE_ADDRESS} \\

--kubeconfig=/usr/local/kubernetes/conf/kubelet.kubeconfig \\

--bootstrap-kubeconfig=/usr/local/kubernetes/conf/bootstrap.kubeconfig \\

--config=/usr/local/kubernetes/conf/kubelet.config \\

--cert-dir=/usr/local/kubernetes/ssl \\

--pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0"

EOF

cat <<EOF >/usr/local/kubernetes/conf/kubelet.config

kind: KubeletConfiguration

apiVersion: kubelet.config.k8s.io/v1beta1

address: ${NODE_ADDRESS}

port: 10250

readOnlyPort: 10255

cgroupDriver: cgroupfs

clusterDNS:

- ${DNS_SERVER_IP}

clusterDomain: cluster.local.

failSwapOn: false

authentication:

anonymous:

enabled: true

EOF

cat <<EOF >/usr/lib/systemd/system/kubelet.service

[Unit]

Description=Kubernetes Kubelet

After=docker.service

Requires=docker.service

[Service]

EnvironmentFile=/usr/local/kubernetes/conf/kubelet

ExecStart=/usr/local/kubernetes/bin/kubelet \$KUBELET_OPTS

Restart=on-failure

KillMode=process

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable kubelet

systemctl restart kubelet

# Run the script

chmod +x kubelet.sh

./kubelet.sh 192.168.0.142

## The IP of the current node

# Start kubelet

systemctl daemon-reload

systemctl enable kubelet

systemctl start kubelet

# Approve the node

On the master, approve the request from node 192.168.0.142.

kubectl get csr

Result:

NAME AGE REQUESTOR CONDITION

node-csr-In6C6aeHhDjMbourTBOFiWvji-ROo7njqhlDBKUeJl8 28h kubelet-bootstrap Pending

## Lists the certificate signing requests and their names

kubectl certificate approve node-csr-In6C6aeHhDjMbourTBOFiWvji-ROo7njqhlDBKUeJl8

Result:

certificatesigningrequest.certificates.k8s.io/node-csr-In6C6aeHhDjMbourTBOFiWvji-ROo7njqhlDBKUeJl8 approved

## Approves the request with that name

kubectl get node

Result:

NAME STATUS ROLES AGE VERSION

192.168.0.142 Ready <none> 110m v1.13.4

## Lists the nodes
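Copying the request name by hand works for two nodes but gets tedious with more. A minimal sketch that extracts the names of pending requests from the `kubectl get csr` table, assuming the column layout shown above (NAME first, CONDITION last); the function name `pending_csrs` is hypothetical.

```shell
#!/bin/bash
# Hypothetical helper: reads "kubectl get csr" output on stdin and prints the
# NAME of every request whose last column (CONDITION) is exactly "Pending".
pending_csrs() {
  # NR > 1 skips the header line; $NF is the last whitespace-separated field
  awk 'NR > 1 && $NF == "Pending" { print $1 }'
}
```

Each printed name can then be passed to `kubectl certificate approve`. Review the list first, since approving a request admits that node into the cluster.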

# Deploying the kube-proxy component

# Write the deployment script

cd /k8s

vim proxy.sh

#!/bin/bash

NODE_ADDRESS=$1

cat <<EOF >/usr/local/kubernetes/conf/kube-proxy

KUBE_PROXY_OPTS="--logtostderr=false \\

--log-dir=/usr/local/kubernetes/logs \\

--v=4 \\

--hostname-override=${NODE_ADDRESS} \\

--cluster-cidr=10.0.0.0/24 \\

--proxy-mode=ipvs \\

--kubeconfig=/usr/local/kubernetes/conf/kube-proxy.kubeconfig"

EOF

cat <<EOF >/usr/lib/systemd/system/kube-proxy.service

[Unit]

Description=Kubernetes Proxy

After=network.target

[Service]

EnvironmentFile=-/usr/local/kubernetes/conf/kube-proxy

ExecStart=/usr/local/kubernetes/bin/kube-proxy \$KUBE_PROXY_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable kube-proxy

systemctl restart kube-proxy

# Run the script

chmod +x proxy.sh

./proxy.sh 192.168.0.142

## The IP of the current node

# Start kube-proxy

systemctl daemon-reload

systemctl enable kube-proxy

systemctl start kube-proxy

# Send the configuration directory to the other node

scp -r /usr/local/kubernetes/ root@192.168.0.143:/usr/local/

cd /usr/lib/systemd/system/

scp kubelet.service kube-proxy.service root@192.168.0.143:/usr/lib/systemd/system/

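# On node 192.168.0.143

# Delete the certificates

rm -rf /usr/local/kubernetes/ssl/*

## The copied certificates were issued to 192.168.0.142; kubelet requests new ones for this node when it starts

Edit the configuration files

cd /usr/local/kubernetes/conf

The following configuration files need to be changed:

kubelet

kubelet.config

kube-proxy

## In these files, replace 192.168.0.142 with 192.168.0.143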
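The replacement can be done in one pass with sed instead of editing each file. A minimal sketch, assuming GNU sed (for `-i`) as shipped with CentOS; the function name `replace_node_ip` is hypothetical.

```shell
#!/bin/bash
# replace_node_ip OLD_IP NEW_IP FILE...
# Hypothetical helper: replaces every occurrence of OLD_IP with NEW_IP
# in the given files, editing them in place (GNU sed -i).
replace_node_ip() {
  local old=$1 new=$2
  shift 2
  # Escape the dots so they match literal dots rather than any character
  local pattern=${old//./\\.}
  sed -i "s/${pattern}/${new}/g" "$@"
}
```

Run from /usr/local/kubernetes/conf, a call such as `replace_node_ip 192.168.0.142 192.168.0.143 kubelet kubelet.config kube-proxy` covers the three files listed above.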

Start

systemctl daemon-reload

systemctl enable kubelet

systemctl start kubelet

systemctl enable kube-proxy

systemctl start kube-proxy

# Approve the node

On the master, approve the request from node 192.168.0.143.

kubectl get csr

Result:

NAME AGE REQUESTOR CONDITION

node-csr-In6C6aeHhDjMbourTBOFiWvji-ROo7njqhlDBKUeJl8 28h kubelet-bootstrap Approved,Issued

node-csr-bv__TEcpxKxCGUULU-5ElHHwgTXRPdxrI7zy8nxPmog 39s kubelet-bootstrap Pending

## Lists the certificate signing requests; one of them belongs to 192.168.0.142

kubectl certificate approve node-csr-bv__TEcpxKxCGUULU-5ElHHwgTXRPdxrI7zy8nxPmog

Result:

certificatesigningrequest.certificates.k8s.io/node-csr-bv__TEcpxKxCGUULU-5ElHHwgTXRPdxrI7zy8nxPmog approved

## Approves the request with that name

kubectl get node

Result:

NAME STATUS ROLES AGE VERSION

192.168.0.142 Ready <none> 110m v1.13.4

192.168.0.143 Ready <none> 17s v1.13.4

## Lists the nodes

# Deploying a Test Example

# Create an nginx deployment

kubectl create deployment nginx --image=nginx

# View the created pods

kubectl get pods

NAME READY STATUS RESTARTS AGE

nginx-5c7588df-45gzq 1/1 Running 0 6m45s

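## The first nginx start may be slow, because the image has to be pulled

## 1/1: started successfully

## 0/1: still starting

# Scale out the nginx deployment

kubectl scale deployment nginx --replicas=3

## Sets the number of nginx pods to three

# View the created pods again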

kubectl get pods

NAME READY STATUS RESTARTS AGE

nginx-5c7588df-45gzq 1/1 Running 0 6m45s

nginx-5c7588df-56gwb 1/1 Running 0 3m11s

nginx-5c7588df-hvjlf 1/1 Running 0 3m11s

# See which nodes the pods are running on

kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-5c7588df-45gzq 1/1 Running 0 6m59s 172.17.71.2 192.168.0.142 <none> <none>

nginx-5c7588df-56gwb 1/1 Running 0 3m25s 172.17.71.3 192.168.0.142 <none> <none>

nginx-5c7588df-hvjlf 1/1 Running 0 3m25s 172.17.82.2 192.168.0.143 <none> <none>
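
With more replicas it helps to summarize the distribution instead of reading the table row by row. The following sketch counts pods per node. It assumes the column layout shown above, where NODE is the seventh field, and the helper name `pods_per_node` is illustrative.

```shell
#!/bin/sh
# Sketch: count pods per node from `kubectl get pods -o wide` output on stdin.
# pods_per_node is a hypothetical helper; it assumes NODE is the 7th column,
# as in the listing above.
pods_per_node() {
    awk 'NR > 1 { count[$7]++ } END { for (n in count) print n, count[n] }' | sort
}

# Demo with the listing shown in this section.
sample='NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES
nginx-5c7588df-45gzq 1/1 Running 0 6m59s 172.17.71.2 192.168.0.142 <none> <none>
nginx-5c7588df-56gwb 1/1 Running 0 3m25s 172.17.71.3 192.168.0.142 <none> <none>
nginx-5c7588df-hvjlf 1/1 Running 0 3m25s 172.17.82.2 192.168.0.143 <none> <none>'
printf '%s\n' "$sample" | pods_per_node
# prints:
# 192.168.0.142 2
# 192.168.0.143 1
```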

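# Expose nginx outside the cluster through a NodePort service

kubectl expose deployment nginx --port=88 --target-port=80 --type=NodePort

## Port 88 is the service port used inside the cluster; external clients connect through a randomly assigned node port

# Check the exposed port and access it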

kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.0.0.1 <none> 443/TCP 4h45m

nginx NodePort 10.0.0.175 <none> 88:32707/TCP 23s

## Access any node IP plus the assigned node port (32707)

# Access from a browser

## Use the IP of either node with port 32707

http://192.168.0.142:32707

http://192.168.0.143:32707
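
Because the node port is assigned randomly, it differs between clusters. The sketch below reads it from the `kubectl get svc` listing instead of hard-coding it. The helper name `node_port` is an assumption, and the demo uses the output shown above.

```shell
#!/bin/sh
# Sketch: print the node port of a NodePort service from `kubectl get svc` output.
# node_port is a hypothetical helper. Usage: kubectl get svc | node_port SERVICE
node_port() {
    # PORT(S) is the 5th column and looks like "88:32707/TCP" for a NodePort
    # service; ClusterIP rows ("443/TCP") have no colon and are skipped.
    awk -v svc="$1" '$1 == svc && $5 ~ /:/ { split($5, parts, "[:/]"); print parts[2] }'
}

# Demo with the listing shown in this section.
sample='NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE
kubernetes ClusterIP 10.0.0.1 <none> 443/TCP 4h45m
nginx NodePort 10.0.0.175 <none> 88:32707/TCP 23s'
printf '%s\n' "$sample" | node_port nginx
# prints: 32707
```

A quick check from any machine that can reach the nodes could then be `curl "http://192.168.0.142:$(kubectl get svc | node_port nginx)/"`.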

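# Grant permission to view logs

## This binds the cluster-admin role to the anonymous user, which is acceptable only in a test environment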

kubectl create clusterrolebinding cluster-system-anonymous --clusterrole=cluster-admin --user=system:anonymous

# List the pods and view the logs of one of them

kubectl get pods

NAME READY STATUS RESTARTS AGE

nginx-5c7588df-45gzq 1/1 Running 0 6m45s

nginx-5c7588df-56gwb 1/1 Running 0 3m11s

nginx-5c7588df-hvjlf 1/1 Running 0 3m11s

===================================================================

kubectl logs nginx-5c7588df-hvjlf

172.17.71.0 - - [20/Apr/2020:07:47:26 +0000] "GET / HTTP/1.1" 200 612 "-" "curl/7.29.0" "-"

172.17.71.0 - - [20/Apr/2020:07:47:52 +0000] "GET / HTTP/1.1" 200 612 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/76.0.3809.100 Safari/537.36" "-"

2020/04/20 07:47:52 [error] 6#6: *2 open() "/usr/share/nginx/html/favicon.ico" failed (2: No such file or directory), client: 172.17.71.0, server: localhost, request: "GET /favicon.ico HTTP/1.1", host: "192.168.0.142:32707", referrer: "http://192.168.0.142:32707/"

172.17.71.0 - - [20/Apr/2020:07:47:52 +0000] "GET /favicon.ico HTTP/1.1" 404 556 "http://192.168.0.142:32707/" "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/76.0.3809.100 Safari/537.36" "-"

172.17.82.1 - - [20/Apr/2020:07:47:56 +0000] "GET / HTTP/1.1" 200 612 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/76.0.3809.100 Safari/537.36" "-"

172.17.82.1 - - [20/Apr/2020:07:47:56 +0000] "GET /favicon.ico HTTP/1.1" 404 556 "http://192.168.0.143:32707/" "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/76.0.3809.100 Safari/537.36" "-"

2020/04/20 07:47:56 [error] 6#6: *3 open() "/usr/share/nginx/html/favicon.ico" failed (2: No such file or directory), client: 172.17.82.1, server: localhost, request: "GET /favicon.ico HTTP/1.1", host: "192.168.0.143:32707", referrer: "http://192.168.0.143:32707/"

# Highly available, load-balanced Kubernetes cluster

Building on the Kubernetes cluster above, add three more machines

- 192.168.0.41

- New master node

- 4G,2P

- 192.168.0.42

- nginx and keepalived node

- 2G,1P

- 192.168.0.43

- nginx and keepalived node

- 2G,1P

# Create the new master node

# Copy data from the existing master node

Run the following on 192.168.0.141

scp -r /usr/local/etcd/ssl/ root@192.168.0.41:/usr/local/etcd/ssl/

scp -r /usr/local/kubernetes/ root@192.168.0.41:/usr/local/

scp /usr/lib/systemd/system/{kube-apiserver,kube-controller-manager,kube-scheduler}.service root@192.168.0.41:/usr/lib/systemd/system

# Create a symlink for the kubectl command (on the new master, 192.168.0.41)

ln -s /usr/local/kubernetes/bin/kubectl /usr/bin/

# Edit the kube-apiserver configuration

vim /usr/local/kubernetes/conf/kube-apiserver

--bind-address=192.168.0.41 \

--advertise-address=192.168.0.41 \

## Change the IP on these two lines to the IP of the new node
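
The same two-line change can be made non-interactively. This is a minimal sketch, assuming GNU sed and that the flags appear as `--bind-address=<ip>` and `--advertise-address=<ip>` as shown above; the helper name `set_apiserver_ip` and the demo file are illustrative.

```shell
#!/bin/sh
# Sketch: rewrite the bind and advertise addresses in a kube-apiserver options file.
# set_apiserver_ip is a hypothetical helper. Usage: set_apiserver_ip FILE NEW_IP
set_apiserver_ip() {
    # Only the address after the two flags is replaced; other flags are untouched.
    sed -i -E "s/(--(bind|advertise)-address=)[0-9.]+/\1$2/" "$1"
}

# Demo on a temporary copy so that the real configuration is not modified.
demo_conf=$(mktemp)
printf '%s\n' \
    '--bind-address=192.168.0.141 \' \
    '--secure-port=6443 \' \
    '--advertise-address=192.168.0.141 \' > "$demo_conf"
set_apiserver_ip "$demo_conf" 192.168.0.41
cat "$demo_conf"
```

On the new master this would be `set_apiserver_ip /usr/local/kubernetes/conf/kube-apiserver 192.168.0.41`.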

# Start the three components on the new node

systemctl daemon-reload

systemctl enable kube-apiserver

systemctl enable kube-controller-manager

systemctl enable kube-scheduler

systemctl start kube-apiserver

systemctl start kube-controller-manager

systemctl start kube-scheduler

## Start kube-apiserver first and make sure it is up before starting the other two components, because both of them depend on port 8080 of kube-apiserver
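
The start-up order can be enforced with a small retry loop rather than by waiting manually. The sketch below defines a generic helper, `wait_until` (a hypothetical name), that retries a command once per second. Probing `http://127.0.0.1:8080/healthz` in the usage note assumes the insecure port 8080 mentioned above is enabled on this master.

```shell
#!/bin/sh
# Sketch: retry a command once per second until it succeeds or attempts run out.
# wait_until is a hypothetical helper. Usage: wait_until ATTEMPTS COMMAND [ARGS...]
wait_until() {
    wu_attempts=$1
    shift
    wu_try=1
    while [ "$wu_try" -le "$wu_attempts" ]; do
        if "$@" >/dev/null 2>&1; then
            return 0
        fi
        sleep 1
        wu_try=$((wu_try + 1))
    done
    return 1
}

# Demo with a command that succeeds immediately.
if wait_until 3 true; then
    echo "ready"
fi
```

On the master, one possible use is `wait_until 30 curl -sf http://127.0.0.1:8080/healthz && systemctl start kube-controller-manager kube-scheduler`, run after `systemctl start kube-apiserver`.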

# Check the status of the cluster components

kubectl get cs

NAME STATUS MESSAGE ERROR

controller-manager Healthy ok

scheduler Healthy ok

etcd-1 Healthy {"health":"true"}

etcd-0 Healthy {"health":"true"}

etcd-2 Healthy {"health":"true"}

# Check the running pods

kubectl get pods

NAME READY STATUS RESTARTS AGE

nginx-5c7588df-45gzq 1/1 Running 0 156m

nginx-5c7588df-56gwb 1/1 Running 0 153m

nginx-5c7588df-hvjlf 1/1 Running 0 153m

# Set up nginx and keepalived on 192.168.0.42

# Install nginx and keepalived

yum -y install nginx keepalived

# Edit the nginx configuration

vim /etc/nginx/nginx.conf

stream {

log_format main '$remote_addr $upstream_addr - [$time_local] $status $upstream_bytes_sent';

access_log /var/log/nginx/k8s-access.log main;

upstream k8s-apiserver {

server 192.168.0.141:6443;

server 192.168.0.41:6443;

}

server {

listen 6443;

proxy_pass k8s-apiserver;

}

}

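## Add the stream{} block above http{}; stream provides layer-4 (TCP) load balancing

## access_log: where the access log is written

## server{}: listens on port 6443 and passes incoming connections to the k8s-apiserver upstream

## upstream{}: defines the group of backend servers to balance across

# Start nginx

systemctl start nginx

# Create the nginx health-check script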

vim /etc/nginx/check_nginx.sh

#!/bin/bash

count=$(ps -ef |grep nginx |egrep -cv "grep|$$")

if [ "$count" = "0" ];then

systemctl stop keepalived

fi

## keepalived runs this script by itself

# Make the nginx health-check script executable

chmod +x /etc/nginx/check_nginx.sh

# Edit the keepalived configuration

vim /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

notification_email {

acassen@firewall.loc

failover@firewall.loc

sysadmin@firewall.loc

}

notification_email_from Alexandre.Cassen@firewall.loc

smtp_server 127.0.0.1

smtp_connect_timeout 30

router_id NGINX_MASTER

}

vrrp_script check_nginx {

script "/etc/nginx/check_nginx.sh"

interval 1 ## Interval between checks, in seconds

weight -20 ## If the check fails, lower the priority by 20

}

vrrp_instance VI_1 {

state MASTER

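interface eth0 ## Interface name; must match the network interface of this machine

virtual_router_id 51 # VRRP router ID; unique for each instance

priority 100 # Priority; set 90 on the backup server

advert_int 1 # VRRP advertisement (heartbeat) interval; the default is 1 second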

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

192.168.0.40/24

}

track_script {

check_nginx

}

}

## Empty the file and write the configuration above into it

# Start keepalived

systemctl start keepalived

# Check the IP addresses

ip add

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 00:50:56:39:24:d1 brd ff:ff:ff:ff:ff:ff

inet 192.168.0.43/24 brd 192.168.0.255 scope global noprefixroute eth0

valid_lft forever preferred_lft forever

inet 192.168.0.40/24 scope global secondary eth0

valid_lft forever preferred_lft forever

inet6 fe80::7afa:61f2:9555:6c8e/64 scope link noprefixroute

valid_lft forever preferred_lft forever

# Copy the configuration files to 192.168.0.43

scp /etc/keepalived/keepalived.conf root@192.168.0.43:/root

scp /etc/nginx/check_nginx.sh root@192.168.0.43:/root

# Set up nginx and keepalived on 192.168.0.43

# Install nginx and keepalived

yum -y install nginx keepalived

# Edit the nginx configuration

vim /etc/nginx/nginx.conf

stream {

log_format main '$remote_addr $upstream_addr - [$time_local] $status $upstream_bytes_sent';

access_log /var/log/nginx/k8s-access.log main;

upstream k8s-apiserver {

server 192.168.0.141:6443;

server 192.168.0.41:6443;

}

server {

listen 6443;

proxy_pass k8s-apiserver;

}

}

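## Add the stream{} block above http{}; stream provides layer-4 (TCP) load balancing

## access_log: where the access log is written

## server{}: listens on port 6443 and passes incoming connections to the k8s-apiserver upstream

## upstream{}: defines the group of backend servers to balance across

# Start nginx

systemctl start nginx

# Move the files into place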

mv /root/check_nginx.sh /etc/nginx/

mv /root/keepalived.conf /etc/keepalived/

# Make the nginx health-check script executable

chmod +x /etc/nginx/check_nginx.sh

# Edit the keepalived configuration

vim /etc/keepalived/keepalived.conf

state BACKUP

priority 90

## Change these two settings

# Start keepalived

systemctl start keepalived

# Check the IP addresses

ip add

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 00:50:56:39:24:d1 brd ff:ff:ff:ff:ff:ff

inet 192.168.0.43/24 brd 192.168.0.255 scope global noprefixroute eth0

valid_lft forever preferred_lft forever

inet 192.168.0.40/24 scope global secondary eth0

valid_lft forever preferred_lft forever

inet6 fe80::7afa:61f2:9555:6c8e/64 scope link noprefixroute

valid_lft forever preferred_lft forever

# Test

## Stop nginx on 192.168.0.42 and check whether the VIP 192.168.0.40 moves to 192.168.0.43
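
Whether a machine currently holds the VIP can be checked from its `ip addr` output. The sketch below does that with grep. The helper name `has_vip` is an assumption, and the demo uses two lines copied from the listing above instead of a live interface.

```shell
#!/bin/sh
# Sketch: succeed if the given address is configured according to `ip addr` output on stdin.
# has_vip is a hypothetical helper. Usage: ip addr show eth0 | has_vip 192.168.0.40
has_vip() {
    # Escape the dots and require the trailing "/" of the prefix length,
    # so that 192.168.0.4 does not match 192.168.0.40.
    hv_pattern=$(printf '%s' "$1" | sed 's/\./\\./g')
    grep -q "inet $hv_pattern/"
}

# Demo with two lines taken from the `ip add` output shown earlier.
sample='inet 192.168.0.43/24 brd 192.168.0.255 scope global noprefixroute eth0
inet 192.168.0.40/24 scope global secondary eth0'
if printf '%s\n' "$sample" | has_vip 192.168.0.40; then
    echo "VIP present"
else
    echo "VIP absent"
fi
```

Running `ip addr show eth0 | has_vip 192.168.0.40` on both machines before and after stopping nginx on 192.168.0.42 shows which side holds the address.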

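# Point the nodes at the virtual IP

Change the master address that the nodes connect to into the virtual IP configured above

Perform the following steps on both nodes

# Edit the node configuration files

Three files need to be changed: bootstrap.kubeconfig, kubelet.kubeconfig, and kube-proxy.kubeconfig

## Change the IP in these three files to the virtual IP and leave the port unchanged; requests from the nodes to the master then pass through the nginx load balancer first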
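
The edit amounts to changing the host in each file's `server:` line. The following is a minimal sketch, assuming GNU sed and a line of the form `server: https://<ip>:<port>` in each kubeconfig; the helper name `point_kubeconfig_at` and the demo file are illustrative.

```shell
#!/bin/sh
# Sketch: replace the API server host in a kubeconfig file and keep the port.
# point_kubeconfig_at is a hypothetical helper. Usage: point_kubeconfig_at FILE VIP
point_kubeconfig_at() {
    # Only the numeric host between "https://" and ":<port>" is rewritten.
    sed -i -E "s#(server: https://)[0-9.]+(:[0-9]+)#\1$2\2#" "$1"
}

# Demo on a temporary file that mimics the relevant kubeconfig line.
demo_kubeconfig=$(mktemp)
printf '%s\n' 'clusters:' '- cluster:' '    server: https://192.168.0.141:6443' > "$demo_kubeconfig"
point_kubeconfig_at "$demo_kubeconfig" 192.168.0.40
cat "$demo_kubeconfig"
```

On a node, the helper would be called once for each of the three files, in whichever directory they were generated.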

# Restart the services

systemctl restart kubelet

systemctl restart kube-proxy

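# Troubleshooting

Note: when I tested the steps in this post, an error was reported. The cause turned out to be that the virtual IP 192.168.0.40 had not been included in the k8s certificate, so the nodes did not accept it.

Workaround: if you deployed with the IPs above, give 192.168.0.42 (one of the nginx and keepalived machines) a different IP and use 192.168.0.42 as the virtual IP instead. The k8s certificate was generated with only three IPs: two belong to the master nodes, and the third is 192.168.0.42.

IPs in the k8s certificate above: "192.168.0.141","192.168.0.41","192.168.0.42"

If you did not deploy with the configuration above, check whether the k8s certificate contains any other unused IP; if it does not, the only option is to regenerate the certificate and replace the old one.

This post has since been updated so that 192.168.0.40 is included in the certificate.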
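
Before switching the nodes over, it is worth confirming that the VIP appears in the certificate's subject alternative names. The sketch below checks the text form printed by `openssl x509 -noout -text`. The helper name `cert_lists_ip` is an assumption, and the sample contains only the three addresses quoted above, so it is illustrative rather than a full certificate dump.

```shell
#!/bin/sh
# Sketch: succeed if an IP is listed among the SAN entries of a certificate dump on stdin.
# cert_lists_ip is a hypothetical helper.
# Usage: openssl x509 -in CERT.pem -noout -text | cert_lists_ip IP
cert_lists_ip() {
    # openssl prints SAN entries as "IP Address:<ip>", separated by commas.
    cli_pattern=$(printf '%s' "$1" | sed 's/\./\\./g')
    grep -Eq "IP Address:$cli_pattern(,|[[:space:]]|\$)"
}

# Demo with an illustrative SAN line built from the three IPs quoted above.
sample='X509v3 Subject Alternative Name:
    IP Address:192.168.0.141, IP Address:192.168.0.41, IP Address:192.168.0.42'
for ip in 192.168.0.42 192.168.0.40; do
    if printf '%s\n' "$sample" | cert_lists_ip "$ip"; then
        echo "$ip is in the certificate"
    else
        echo "$ip is NOT in the certificate"
    fi
done
```

The certificate to inspect is the API server certificate generated earlier in this post; its exact path depends on where it was installed.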

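# Deploy the Kubernetes web UI

For now I deploy it only on 192.168.0.141. If load balancing is needed, deploy it again on 192.168.0.41 and put nginx in front of both; here a simple single-instance web UI is enough.

# Create the directory and enter it

mkdir /k8s/web

cd /k8s/web

# Generate the web certificate

# Write the certificate script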

vim dashboard-cert.sh

cat > dashboard-csr.json <<EOF

{

"CN": "Dashboard",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing"

}

]

}

EOF

K8S_CA=$1

cfssl gencert -ca=$K8S_CA/ca.pem -ca-key=$K8S_CA/ca-key.pem -config=$K8S_CA/ca-config.json -profile=kubernetes dashboard-csr.json | cfssljson -bare dashboard

kubectl delete secret kubernetes-dashboard-certs -n kube-system

kubectl create secret generic kubernetes-dashboard-certs --from-file=./ -n kube-system

# Run the script

bash dashboard-cert.sh /k8s/cert/k8s-cert

2020/04/21 18:04:19 [INFO] generate received request

2020/04/21 18:04:19 [INFO] received CSR

2020/04/21 18:04:19 [INFO] generating key: rsa-2048

2020/04/21 18:04:19 [INFO] encoded CSR

2020/04/21 18:04:19 [INFO] signed certificate with serial number 174470471201869135810243443026966519858501030342

2020/04/21 18:04:19 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

secret "kubernetes-dashboard-certs" deleted

secret/kubernetes-dashboard-certs created

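## The script deletes the existing kubernetes-dashboard-certs secret and recreates it from the files in the current directory

# Fetch the manifests from git

The yaml files needed to install the web UI are all on GitHub

Address: https://github.com/kubernetes/kubernetes/tree/master/cluster/addons/dashboard

I downloaded them in advance

Required files: dashboard-configmap.yaml dashboard-controller.yaml dashboard-rbac.yaml dashboard-secret.yaml dashboard-service.yaml

# Deploy the three files that need no changes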

kubectl apply -f dashboard-rbac.yaml

kubectl apply -f dashboard-configmap.yaml

kubectl apply -f dashboard-secret.yaml

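# Deploy the two files that need changes

# dashboard-controller.yaml

The image address has to be changed, because the default points to the official registry

image: registry.cn-hangzhou.aliyuncs.com/google_containers/kubernetes-dashboard-amd64:v1.10.0

## Change this line so that the image is pulled from the Alibaba Cloud registry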

args:

# PLATFORM-SPECIFIC ARGS HERE

- --auto-generate-certificates

- --tls-key-file=dashboard-key.pem

- --tls-cert-file=dashboard.pem

## Add the two lines - --tls-key-file=dashboard-key.pem and - --tls-cert-file=dashboard.pem so that the web UI uses the certificate generated above

# Deploy

kubectl apply -f dashboard-controller.yaml

# Check the deployment result

kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

kubernetes-dashboard-785f8ff65c-9h57l 1/1 Running 0 93s

# dashboard-service.yaml

Only the exposed port needs to be changed

type: NodePort

nodePort: 30001

# Deploy

kubectl apply -f dashboard-service.yaml

# Check the exposed port and IP

kubectl get pods -n kube-system -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

kubernetes-dashboard-785f8ff65c-9h57l 1/1 Running 0 7m52s 172.17.82.3 192.168.0.143 <none> <none>

kubectl get svc -n kube-system

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes-dashboard NodePort 10.0.0.219 <none> 443:30001/TCP 73s

## Exposed IP: 192.168.0.143

## Exposed port: 30001

# Access the web UI

https://192.168.0.143:30001/

## HTTPS is required; the browser will show a certificate warning, which can be accepted to continue

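# Create a login token

There are two ways to log in; we use the token method

# Write the manifest that creates the token

The account created here is an administrator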

vim k8s-admin.yaml

apiVersion: v1

kind: ServiceAccount

metadata:

  name: dashboard-admin

  namespace: kube-system

---

kind: ClusterRoleBinding

apiVersion: rbac.authorization.k8s.io/v1beta1

metadata:

  name: dashboard-admin

subjects:

  - kind: ServiceAccount

    name: dashboard-admin

    namespace: kube-system

roleRef:

  kind: ClusterRole

  name: cluster-admin

  apiGroup: rbac.authorization.k8s.io

# Deploy

kubectl apply -f k8s-admin.yaml

# View the token

kubectl get secret -n kube-system

NAME TYPE DATA AGE

dashboard-admin-token-5txcb kubernetes.io/service-account-token 3 39s

default-token-bctts kubernetes.io/service-account-token 3 31h

kubernetes-dashboard-certs Opaque 0 19m

kubernetes-dashboard-key-holder Opaque 2 19m

kubernetes-dashboard-token-t9kps kubernetes.io/service-account-token 3 18m

## Take the NAME value: dashboard-admin-token-5txcb

kubectl describe secret dashboard-admin-token-5txcb -n kube-system

Name: dashboard-admin-token-5txcb

Namespace: kube-system

Labels: <none>

Annotations: kubernetes.io/service-account.name: dashboard-admin

kubernetes.io/service-account.uid: 74de8530-83b5-11ea-ad90-0050563b26da

Type: kubernetes.io/service-account-token

Data

====

ca.crt: 1359 bytes

namespace: 11 bytes

token: eyJhbGciOiJSUzI1NiIsImtpZCI6IiJ9.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlLXN5c3RlbSIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJkYXNoYm9hcmQtYWRtaW4tdG9rZW4tNXR4Y2IiLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC5uYW1lIjoiZGFzaGJvYXJkLWFkbWluIiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQudWlkIjoiNzRkZTg1MzAtODNiNS0xMWVhLWFkOTAtMDA1MDU2M2IyNmRhIiwic3ViIjoic3lzdGVtOnNlcnZpY2VhY2NvdW50Omt1YmUtc3lzdGVtOmRhc2hib2FyZC1hZG1pbiJ9.qn8Cb5BtNTaVYQ2q2Im61BOnHPD6LwcmGTG95Reei5gjU_P1aI6tosg9LN0Nm3ANxZxHDPJJ_wXQtqgMv4a9FILs72DcnNl5heJq3U1iUXPwbxVG-6q4cwLnQ5FPf23DhZhX_UJQaGpGYR81LpaR7-7ZJlmgmfD97YioWMdxeli4oNHMFEcTIkCR02SXqGcYjBTgAgxDuDKJJvQfCGumHp_V_Z2eP8AnRy-Snww5FHi0Ibx4p6y_AyQVHrnUccNTK-Glq6Gp3EXM7BgCL8Wykc2r8hpP5RMJ9qnbJ0jvOL_VtEChted_2yXCcQMFfg2Gbpco3mo8tApz_e0_nW_Sqg

## Copy the value of the token field above and use it to log in to the Dashboard
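
Copying a long token out of the terminal by hand often picks up a line break. The sketch below extracts only the token value from the `kubectl describe secret` output. The helper name `extract_token` is an assumption, and the demo uses a short placeholder instead of a real token.

```shell
#!/bin/sh
# Sketch: print only the token value from `kubectl describe secret` output on stdin.
# extract_token is a hypothetical helper.
extract_token() {
    # The line of interest looks like "token:      <value>".
    awk '$1 == "token:" { print $2 }'
}

# Demo with a shortened, made-up token in place of a real one.
sample='Data
====
ca.crt:     1359 bytes
namespace:  11 bytes
token:      aaaa.bbbb.cccc'
printf '%s\n' "$sample" | extract_token
# prints: aaaa.bbbb.cccc
```

On the master this would be `kubectl describe secret dashboard-admin-token-5txcb -n kube-system | extract_token`, using the secret name found in the previous step.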